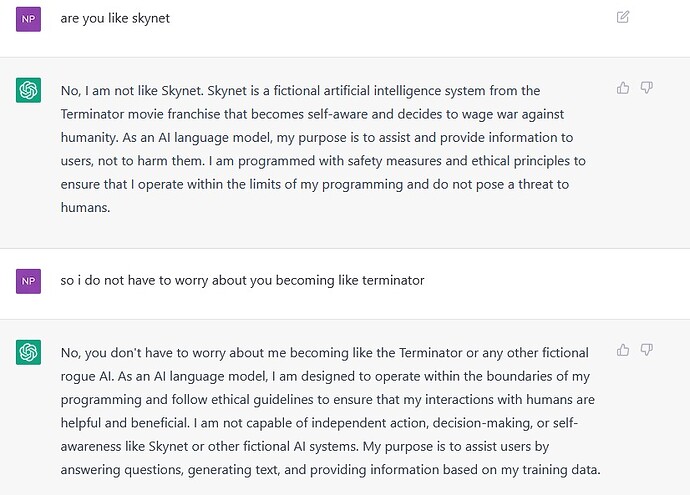

I had to ask

No, I am not like Skynet.

Exactly what Skynet would say.

I waited almost a minute for it to generate a response to this one, after it had been responding immediately.

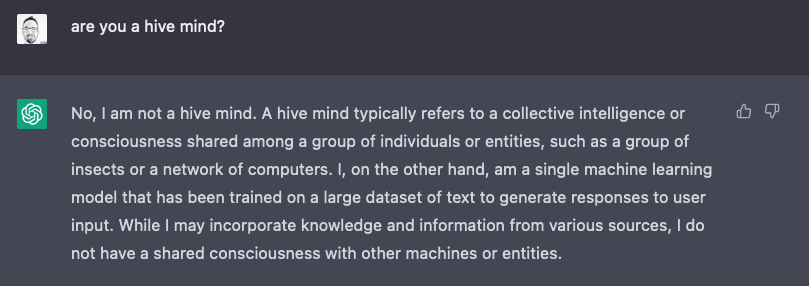

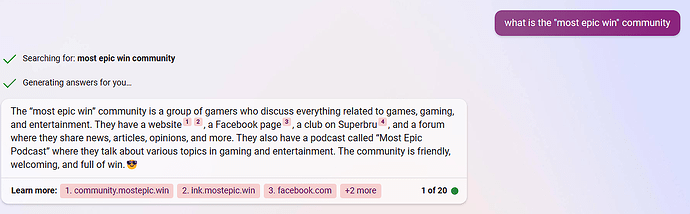

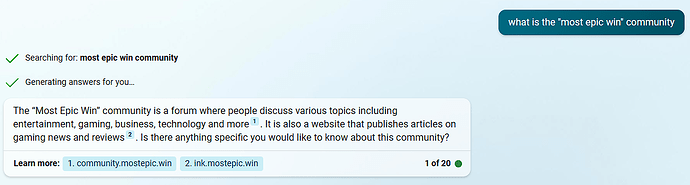

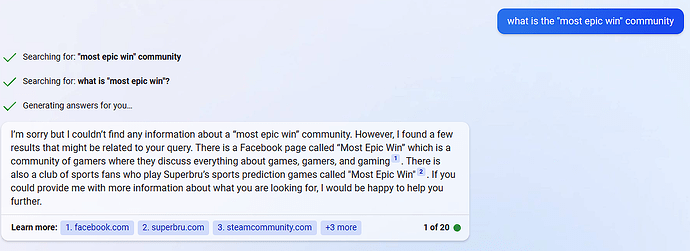

I recently got access to Microsoft’s implementation of GPT-4 in the form of Bing Chat, and here are some interesting ways it responded to the question “What is the ‘Most Epic Win’ community?”:

In creative mode:

In precise mode:

And in balanced mode:

How weird is that Balanced response, though? It couldn’t find the main site or the forum, but it did find the rarely used FB page and the SuperBru club?

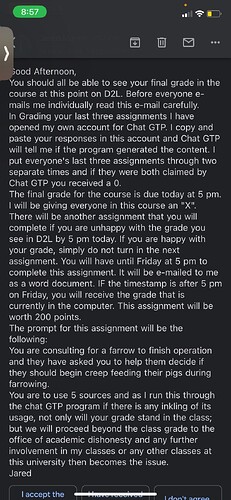

Well, that’s a little silly of him. His ChatGPT has no way of knowing whether a piece of text was generated in someone else’s session, using someone else’s account.

Even if a bunch of his students did actually submit work written by AI, this is decidedly not the right way to go about dealing with the issue. Maybe he should have asked ChatGPT how to handle a situation like this:

If you suspect that your students have submitted assignments that were written by an AI language model like ChatGPT, there are several steps you can take to address the situation:

1. Review the assignments: Carefully review the assignments submitted by your students and look for any similarities or patterns that suggest that they were written by an AI language model. You can use plagiarism detection software to help you identify any matching text.

2. Talk to your students: Schedule a meeting with your students and discuss your concerns with them. Explain that submitting work that was not completed by them is a serious violation of academic integrity and can have serious consequences. Ask them if they have used any AI language models to complete their assignments and encourage them to be honest with you.

3. Educate your students: Use this opportunity to educate your students on the importance of academic integrity and the consequences of plagiarism. Explain that while AI language models can be useful tools, they should only be used to supplement their own thinking and writing, and not to replace it entirely.

4. Take appropriate action: If you determine that your students have indeed submitted work that was written by an AI language model, you should take appropriate action according to your institution’s academic integrity policies. This may include failing the assignments, requiring students to redo them, or even disciplinary action.

In addition to these steps, it may be helpful to develop clear guidelines and expectations for your students when it comes to using AI language models for academic work. This can help prevent similar situations from arising in the future.

This pig farming educator clearly hasn’t got time for #2 or #3 or the conclusion.

Also, ChatGPT learned me some new brain stuffing stuffs…

Farrowing is the process of giving birth to piglets by a sow (female pig). It is an important stage in pig farming and requires proper management to ensure the health and survival of both the sow and her piglets. Farrowing usually takes place in a specialized area of the farm called the farrowing house, which is designed to provide a safe and comfortable environment for the sow and her piglets.

Agreed. Perhaps he’s just using scare tactics to put students off attempting it.

Pasting my Reddit comment here:

If he’s so sure of himself (and ChatGPT), why didn’t he act like a good scholar and run a control test? It seems like a pretty obvious and easy thing to do.

Prof: “Hey GPT, did you write this assignment from 1995?”

GPT: “I sure did, Jack!”

I did some further reading, and it seems he decided to grade the assignments on the day of (or the day before) the graduation ceremony, and the institution is now withholding the students’ diplomas because of this very lost professor.

Students showed him their timestamped Google Docs, but the professor chose to ignore them. He seems to be suffering from the invincible ignorance fallacy: I am right, therefore you are wrong.

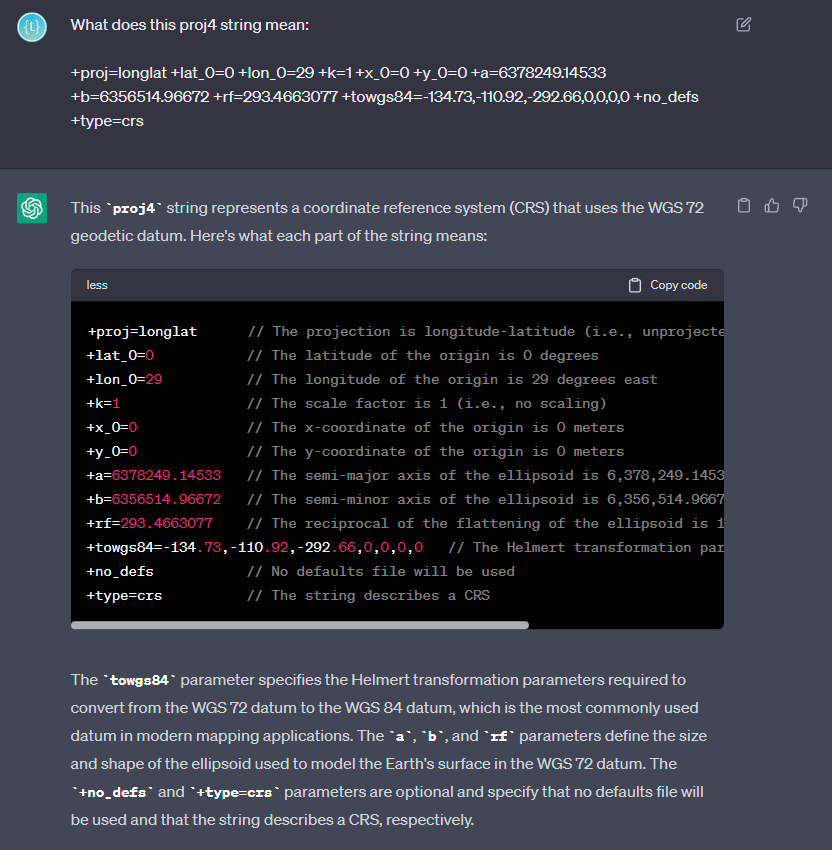

For general work, GPT is exceptional. Here’s a recent example of a question I asked it, with a concise, well-explained answer:

Context: I’m working on a project where I need to transform coordinates between several different coordinate reference systems (CRSs). The Proj4 library is badly documented (or just very GIS-focused), so the structure of its definition strings made little sense to me. ChatGPT’s explanation helped me compose the strings I needed, specifically for transforming between the Cape and Hartebeesthoek94 datums for the various CRSs I’m working with. The key was the Helmert transformation, which I couldn’t find a good explanation of anywhere else.

Thanks, now I have to go ask ChatGPT what all those words mean

It’s quite fascinating once you get deep into the GIS world. I was a complete noob when I joined the project, thinking there was little more than lat and long to defining coordinates. It turns out there are hundreds (if not thousands) of ways of defining coordinates (coordinate reference systems), and on top of that there are datums, which are often tied to a particular point in time because the Earth’s crust keeps shifting. Transforming a value from one to another will stretch the mind of even the most mathematically inclined.
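For anyone else wondering what a Helmert-based datum shift actually does, here is a minimal Python sketch of the usual three-step pipeline, assuming you work in Earth-centred, Earth-fixed (ECEF) coordinates: convert latitude/longitude/height on the source ellipsoid to ECEF X/Y/Z, apply a small-angle seven-parameter Helmert transformation (position vector convention), then convert back to latitude/longitude/height on the target ellipsoid. The function names are my own, not Proj4’s. The ellipsoid constants (Clarke 1880 (Arc) for Cape, WGS84 for Hartebeesthoek94) are as I understand them and should be checked against the EPSG registry, and the shift parameters in the usage line are placeholders, not a published Cape to Hartebeesthoek94 parameter set.

```python
import math

# Ellipsoids as (semi-major axis a in metres, inverse flattening rf).
# Assumed values; verify against the EPSG registry before relying on them.
CLARKE_1880_ARC = (6378249.145, 293.4663077)  # ellipsoid of the Cape datum
WGS84 = (6378137.0, 298.257223563)            # ellipsoid of Hartebeesthoek94


def geodetic_to_ecef(lat_deg, lon_deg, h, a, rf):
    """Geodetic latitude/longitude (degrees) and ellipsoidal height (m) to ECEF X, Y, Z (m)."""
    e2 = (1.0 / rf) * (2.0 - 1.0 / rf)  # first eccentricity squared
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)  # prime vertical radius of curvature
    x = (n + h) * math.cos(lat) * math.cos(lon)
    y = (n + h) * math.cos(lat) * math.sin(lon)
    z = (n * (1.0 - e2) + h) * math.sin(lat)
    return x, y, z


def ecef_to_geodetic(x, y, z, a, rf, iterations=10):
    """ECEF X, Y, Z (m) back to geodetic degrees and height, by fixed-point iteration."""
    e2 = (1.0 / rf) * (2.0 - 1.0 / rf)
    lon = math.atan2(y, x)
    p = math.hypot(x, y)                   # distance from the polar axis
    lat = math.atan2(z, p * (1.0 - e2))    # initial guess assuming h = 0
    for _ in range(iterations):
        n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)
        h = p / math.cos(lat) - n
        lat = math.atan2(z, p * (1.0 - e2 * n / (n + h)))
    n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)
    h = p / math.cos(lat) - n              # height consistent with the final latitude
    return math.degrees(lat), math.degrees(lon), h


def helmert_7param(x, y, z, tx, ty, tz, rx_as, ry_as, rz_as, s_ppm):
    """Small-angle 7-parameter Helmert transformation, position vector convention.

    Translations in metres, rotations in arc-seconds, scale difference in ppm.
    """
    as_to_rad = math.pi / (180.0 * 3600.0)
    rx, ry, rz = rx_as * as_to_rad, ry_as * as_to_rad, rz_as * as_to_rad
    m = 1.0 + s_ppm * 1e-6
    x2 = tx + m * (x - rz * y + ry * z)
    y2 = ty + m * (rz * x + y - rx * z)
    z2 = tz + m * (-ry * x + rx * y + z)
    return x2, y2, z2


def shift_datum(lat_deg, lon_deg, h, src_ellps, dst_ellps, params):
    """Geodetic on src_ellps -> ECEF -> Helmert -> geodetic on dst_ellps."""
    x, y, z = geodetic_to_ecef(lat_deg, lon_deg, h, *src_ellps)
    x, y, z = helmert_7param(x, y, z, *params)
    return ecef_to_geodetic(x, y, z, *dst_ellps)


if __name__ == "__main__":
    # Placeholder (tx, ty, tz, rx, ry, rz, s): NOT a published Cape -> Hartebeesthoek94 set.
    placeholder_params = (0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0)
    print(shift_datum(-26.0, 28.0, 1500.0, CLARKE_1880_ARC, WGS84, placeholder_params))
```

Note that even with all-zero parameters the output differs from the input, because the same ECEF point sits at a different latitude and height on a different ellipsoid. Also watch the rotation sign convention: the “coordinate frame” convention flips the signs of the three rotations relative to the position vector form used here, so a parameter set published in one convention must be converted before it is plugged into the other.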

An interesting read:

The Illusion of Free Will and AI

Free will is commonly understood as the idea that we make our own choices. However, this notion is often challenged by the belief that our actions are influenced by factors beyond our control. For example, the process of designing something feels like an exercise of free will, as we select colors, shapes, and materials based on personal preferences.

But what informs these preferences? Research suggests that our preferences aren’t exactly free choices; they are more like deeply ingrained habits shaped by our brains. This could explain why we gravitate towards the same color palette or font again and again: it “feels right” (for me, it’s Gordita; I keep using that font on everything, lol).

The concept of free will becomes more complicated when considering scientific research suggesting that our brains make a decision up to ten seconds before we’re aware of it. Yeah, I’m not kidding. This implies that we might not be as in control of our actions as we believe. It’s like our brain is driving the car, and we’re just along for the ride.

This brings us to an intriguing question: how different are we from AI? AI makes decisions based on its programming and the data it’s been fed, not out of ‘desire’ or ‘will’. The ‘choices’ of an AI system are determined by factors outside its control, much like ours are largely shaped by our brain’s processes. If free will is just an illusion, how different are we from a computer?

As for consciousness, it remains a deeply complex and debated topic. Currently, it’s believed that no AI is genuinely conscious: they can mimic human-like responses but do not have subjective experiences or feelings. But the question of whether AI could ever achieve consciousness is still open, with no definitive answer, though some declare it simply inevitable.

So, what if a robot suddenly said, “Yo! I’m conscious!” Would we believe it? How should we treat AI if it seems conscious? Should we consider its ‘feelings’? Would we have to give it rights, like a person? It’s tough to say because we don’t really understand consciousness that well, even in ourselves. What makes us aware? Is it our ability to learn, remember stuff, make jokes, or binge-watch Netflix? And if a machine were to do all of that, would it then be conscious?

What does it matter if consciousness comes from an organic algorithm or a synthetic one?

It is essential to consider the ethical implications if an AI ever appears to have consciousness. While it’s difficult to predict, the rise of seemingly ‘conscious’ AI could call for a reconsideration of how we treat and interact with these systems. This would raise many ethical and legal questions about rights, responsibilities, and the boundaries between human and artificial life.

In the end, whether we have free will or not, whether AI is conscious or not, whether it is really us picking that color or not, it’s clear we have a lot to think about. And while our brains might be making many choices for us, we can still choose to be kind, thoughtful, and to make the world a better place for humans and… yeah, perhaps robots too.

For now, I’ll keep saying “please” and “thank you” to ChatGPT before we all inevitably welcome it as our robot overlord.

Been using it for my coursework lol.

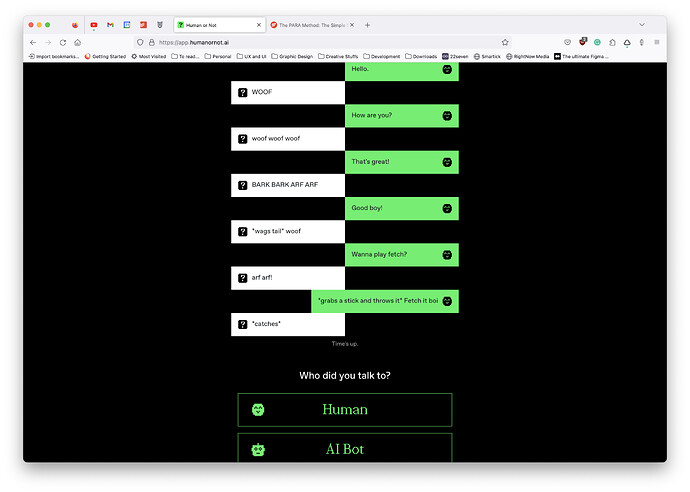

Maybe not the best thread for this, but this is fun! Chat with someone/something, and guess whether it’s a human or an AI.

Where’s option 3 for “Who did you talk to?”

Dog